How to scrape Indeed in 2026? One of the best protected websites in the world

Indeed deploys a full arsenal against scraping: Cloudflare, TLS fingerprinting, dynamic tokens, behavioral detection. Learn how to scrape it with a lightweight script, no headless browser needed.

Disclaimer: This article is published for educational and research purposes only. Please respect the Terms of Service (ToS) and the rules of each website before performing any data extraction.

Indeed is one of the best protected websites against scraping.

- Cloudflare

- TLS Fingerprinting

- Dynamic pagination tokens

- Behavioral detection

Indeed's French site (fr.indeed.com) deploys the complete arsenal. I'll teach you how to scrape it with a lightweight script, without using expensive and unreliable headless browsers. Want to find out how it works? Let's go!

The full source code is available on GitHub.

The overall architecture

The scraper is a single script (scrape_indeed.py) that relies on two dependencies:

pip install curl_cffi lxml

- curl_cffi -- a Python binding for curl that enables TLS impersonation of real browsers

- lxml -- HTML parsing to extract structured data from pages

The flow is simple: load the results page, extract job listings as JSON, then visit each listing individually via an "embedded" URL to enrich the data.

curl_cffi Session (Chrome TLS)
        |
        v
GET listing (SERP) --> parse Mosaic/Legacy --> JSON job listings
        |
        v
For each job:
    GET viewjob?viewtype=embedded --> parse JSON/JSON-LD --> enrich listing
        |
        v
Full JSON output + optional export

curl_cffi: the main weapon

Why not requests?

The fundamental problem with scraping in 2026 is TLS fingerprinting. When a browser establishes an HTTPS connection, the TLS ClientHello contains a unique signature:

- The order of cipher suites

- Supported TLS extensions

- Elliptic curves

- ALPN support (HTTP/2)

- The order in which all of the above appear

This signature is commonly summarized as a JA3 fingerprint (or JA4, its successor). Python requests relies on Python's standard OpenSSL-based TLS stack, whose signature is instantly recognizable as "not a real browser".
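To make the idea concrete, here is a minimal sketch of how a JA3 fingerprint is derived from ClientHello fields, following the public JA3 definition: five comma-separated fields, values joined with hyphens, then an MD5 hash. The function names and the sample values are illustrative assumptions and are not part of the scraper.

```python
import hashlib

# GREASE values (RFC 8701) are random placeholders that browsers insert;
# JA3 ignores them so the hash stays stable across connections.
GREASE = {0x0A0A + 0x1010 * i for i in range(16)}


def ja3_string(version, ciphers, extensions, curves, point_formats):
    """Build the JA3 string: fields separated by ',', values by '-'."""
    fields = [
        [version],
        [c for c in ciphers if c not in GREASE],
        [e for e in extensions if e not in GREASE],
        [g for g in curves if g not in GREASE],
        list(point_formats),
    ]
    return ",".join("-".join(str(v) for v in field) for field in fields)


def ja3_hash(version, ciphers, extensions, curves, point_formats):
    """Return the MD5 hex digest of the JA3 string."""
    raw = ja3_string(version, ciphers, extensions, curves, point_formats)
    return hashlib.md5(raw.encode("ascii")).hexdigest()
```

Because the values are hashed in the order the client sent them, two clients offering the same cipher suites in a different order produce different fingerprints, which is why the ordering matters as much as the content.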

How curl_cffi solves the problem

curl_cffi (4900+ stars on GitHub) is a binding for curl-impersonate, a curl fork that faithfully reproduces the TLS handshake of real browsers. In one line:

from curl_cffi import requests

session = requests.Session(impersonate="chrome")

In the script, that's exactly what we do:

IMPERSONATE = "chrome"

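def create_session(proxy_url: str | None = None) -> requests.Session:
    """Create a curl_cffi session that impersonates Chrome, optionally via a proxy."""
    session = requests.Session(impersonate=IMPERSONATE)
    if proxy_url:
        # Route both HTTP and HTTPS traffic through the same proxy
        session.proxies = {"http": proxy_url, "https": proxy_url}
    return session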

The "chrome" profile without a version number automatically uses the latest Chrome version available in curl_cffi (currently Chrome 142). You can also pin a specific version with "chrome124" or "chrome131" for example. Each request produces a TLS handshake identical to Chrome's: same cipher suites, same order, same extensions, same ALPN.

Available profiles

curl_cffi supports dozens of profiles:

| Browser | Available versions |
|---|---|
| Chrome | 99, 100, 101, 104, 107, 110, 116, 119, 120, 123, 124, 131, 133a, 136, 142 |
| Firefox | 133, 135, 144 |
| Safari | 15.3, 15.5, 17.0, 18.0, 18.4, 26.0 |
| Edge | 99, 101 |
| Tor | 145 |

Each profile faithfully reproduces the TLS specifics of the target browser down to the version.

HTTP headers: consistency with impersonation

TLS impersonation isn't enough. HTTP headers must be consistent with the simulated browser. Indeed specifically checks Sec-Fetch headers.

Headers for the listing page (SERP)

LISTING_HEADERS = {
    "User-Agent": "Mozilla/5.0 (Macintosh; Intel Mac OS X 10.15; rv:148.0) "
                  "Gecko/20100101 Firefox/148.0",
    "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
    "Accept-Language": "fr,fr-FR;q=0.9,en-US;q=0.8,en;q=0.7",
    "Alt-Used": "fr.indeed.com",
    "Connection": "keep-alive",
    "Upgrade-Insecure-Requests": "1",
    "Sec-Fetch-Dest": "document",
    "Sec-Fetch-Mode": "navigate",
    "Sec-Fetch-Site": "same-origin",
    "Sec-Fetch-User": "?1",
    "Priority": "u=0, i",
    "Pragma": "no-cache",
    "Cache-Control": "no-cache",
}

Why these headers matter

Sec-Fetch-*: These are headers that real browsers send automatically. They indicate the request context:

- Sec-Fetch-Site: same-origin = intra-site navigation (not an external API call)

- Sec-Fetch-Mode: navigate = user navigation (not a JS fetch)

- Sec-Fetch-Dest: document = requesting an HTML document

- Sec-Fetch-User: ?1 = the request is user-initiated (click)

A script without these headers is immediately identifiable.
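To illustrate why missing or inconsistent Sec-Fetch headers stand out, here is a sketch of the kind of check a server could run on an incoming page navigation. It is purely hypothetical: Indeed's real detection rules are not public, and the function name and issue messages are invented for this example.

```python
# Values a real browser sends for a user-initiated page navigation
EXPECTED_NAVIGATION = {
    "sec-fetch-dest": "document",
    "sec-fetch-mode": "navigate",
    "sec-fetch-user": "?1",
}
# The four values defined for Sec-Fetch-Site
ALLOWED_SITES = {"same-origin", "same-site", "cross-site", "none"}


def navigation_header_issues(headers: dict[str, str]) -> list[str]:
    """List the reasons a request does not look like a browser navigation."""
    normalized = {k.lower(): v.strip() for k, v in headers.items()}
    issues = []
    for name, expected in EXPECTED_NAVIGATION.items():
        actual = normalized.get(name)
        if actual is None:
            issues.append(f"missing {name}")
        elif actual != expected:
            issues.append(f"{name}={actual!r}, expected {expected!r}")
    site = normalized.get("sec-fetch-site")
    if site is None:
        issues.append("missing sec-fetch-site")
    elif site not in ALLOWED_SITES:
        issues.append(f"unknown sec-fetch-site value {site!r}")
    return issues
```

Running it against LISTING_HEADERS should return an empty list, while the default headers of a plain HTTP client would report all four headers as missing.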

Accept-Language: fr,fr-FR;q=0.9,en-US;q=0.8,en;q=0.7 is consistent with a French user on fr.indeed.com. A standalone en-US would be suspicious.

Referer: The referer is dynamically computed from the search URL:

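from urllib.parse import urlparse

# Copy the shared headers so the module-level dict is never mutated
headers = dict(LISTING_HEADERS)
parsed = urlparse(url)
# The Referer points at the /jobs page of the same host as the search URL
headers["Referer"] = f"{parsed.scheme}://{parsed.netloc}/jobs"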

Headers for detail pages (job listings)

DETAIL_HEADERS = {
    "User-Agent": "Mozilla/5.0 (Macintosh; Intel Mac OS X 10.15; rv:148.0) "
                  "Gecko/20100101 Firefox/148.0",
    "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
    "Accept-Language": "fr,fr-FR;q=0.9,en-US;q=0.8,en;q=0.7",
}

The headers are lighter for detail pages -- matching what the browser actually does. The referer points to the job URL in the listing:

headers = dict(DETAIL_HEADERS)

headers["Referer"] = job.get("url", "https://fr.indeed.com/jobs")

The proxy

The script accepts a proxy via the PROXY_URL environment variable:

PROXY_URL="http://user:pass@host:port" python scrape_indeed.py

This is configured at session creation:

if proxy_url:
    session.proxies = {"http": proxy_url, "https": proxy_url}

Without a proxy, the script works but Indeed will likely block after a few requests. Residential proxy providers (Decodo/Smartproxy, Bright Data, etc.) are recommended -- datacenter proxies are detected more easily.
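Reading the variable could look like the sketch below. The helper name proxies_from_env and its env parameter (which makes the function easy to test) are assumptions for this example; only the PROXY_URL variable and the shape of the proxies dict come from the script.

```python
import os


def proxies_from_env(env=None, var="PROXY_URL"):
    """Return a proxies dict built from the environment, or None if unset."""
    env = os.environ if env is None else env
    proxy_url = (env.get(var) or "").strip()
    if not proxy_url:
        return None
    # The same proxy handles both plain HTTP and HTTPS traffic
    return {"http": proxy_url, "https": proxy_url}
```

Returning None when the variable is missing or empty lets the caller log a "no proxy configured" warning instead of silently sending an empty proxy setting.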

Bot detection

Indeed uses Cloudflare for protection. Without pagination (first page only), blocking typically manifests as a 403 with a Cloudflare captcha. In this case, two options:

- Switch proxy -- a new residential IP is often enough to get through

- Use a captcha-solving service like CapSolver with their AntiCloudflareTask, which solves the challenge and returns cf_clearance cookies to inject into the session

We won't cover this part here.

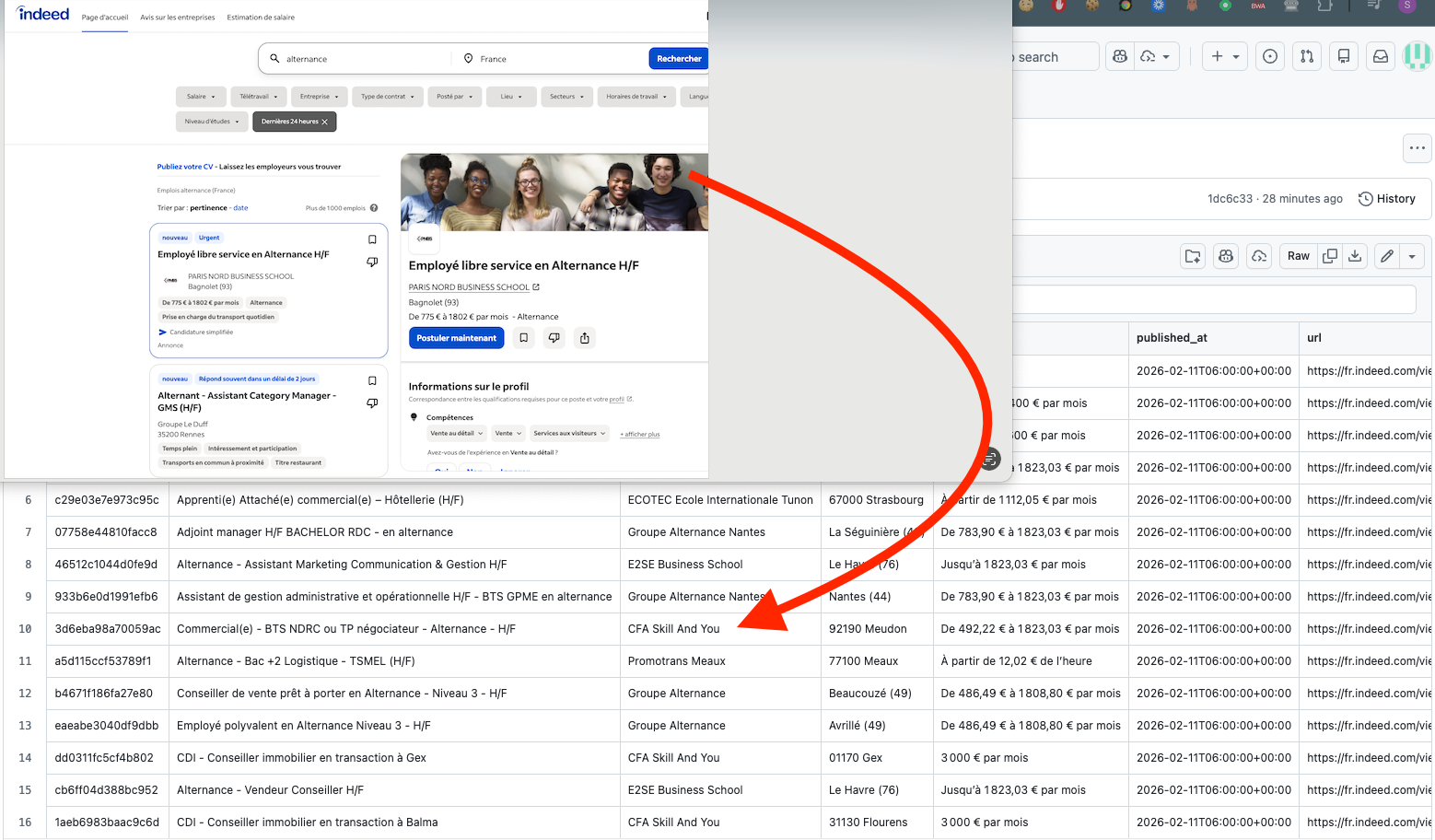

Pagination: Complete shutdown since February 2026

Indeed has now completely blocked pagination for non-logged-in users. Pagination tokens (pp) are still present in the response (in pageLinks), but they no longer work without an authenticated session: any attempt to use them or manually increment the start parameter redirects to the login page.

This is a major change from 2024 when these same pp tokens worked in anonymous mode. Today, Indeed requires login as a prerequisite for any navigation beyond the first page of results.

The standalone script therefore scrapes the first page of results (typically 15 listings), then enriches each one via its detail page.
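Both failure modes described above (a Cloudflare 403 and a redirect to the login page) can be told apart with a small classifier. This is a sketch under assumptions: the function name, the returned labels, and the challenge markers searched for in the body are illustrative choices, and the login host secure.indeed.com/auth is the redirect target listed in the technical summary.

```python
from urllib.parse import urlparse

# Strings that commonly appear in Cloudflare challenge pages (assumed markers)
CHALLENGE_MARKERS = ("challenge-platform", "cf-chl", "Just a moment")


def classify_response(status_code: int, final_url: str, body: str = "") -> str:
    """Label a response as ok, login_required, cloudflare_challenge, forbidden or error."""
    parsed = urlparse(final_url)
    # A redirect that ends on the auth host means Indeed wants a logged-in session
    if parsed.netloc.lower() == "secure.indeed.com" and parsed.path.startswith("/auth"):
        return "login_required"
    if status_code == 403:
        if any(marker in body for marker in CHALLENGE_MARKERS):
            return "cloudflare_challenge"
        return "forbidden"
    if 200 <= status_code < 300:
        return "ok"
    return "error"
```

A caller would pass the status code, the final URL after redirects, and the response text, then decide whether to switch proxy (challenge) or stop paginating (login required).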

At crawlergrid.ai, we handle all types of data extraction and automation for you, with no pagination limits or captcha blocking.

Parsing

Listing page: the Mosaic format

Indeed uses a "Mosaic" architecture (2026) that exposes data via JavaScript assignments:

window.mosaic.providerData["mosaic-provider-jobcards"] = {...};

The scraper locates these assignments with a regex, then extracts the JSON by counting braces. A block can be hundreds of KB of nested objects on a single line, so a regex alone cannot reliably find the matching closing brace, and json.loads needs the exact object boundaries to succeed. Job listings are in the mosaic-provider-jobcards provider; the total count is in MosaicProviderRichSearchDaemon.

A legacy fallback is also supported for older pages (<script id="comp-initialData">).
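A legacy fallback of this kind could be sketched as below. Two assumptions apply: the script tag's content is treated as plain JSON, and the standard library's html.parser is used here instead of lxml only to keep the example dependency-free. The class and function names are hypothetical.

```python
import json
from html.parser import HTMLParser


class ScriptByIdParser(HTMLParser):
    """Collect the text content of the first <script> with a given id."""

    def __init__(self, script_id: str):
        super().__init__()
        self.script_id = script_id
        self._capturing = False
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("id") == self.script_id:
            self._capturing = True

    def handle_endtag(self, tag):
        if tag == "script":
            self._capturing = False

    def handle_data(self, data):
        if self._capturing:
            self.chunks.append(data)


def extract_legacy_initial_data(html: str):
    """Return the decoded comp-initialData payload, or None if absent or invalid."""
    parser = ScriptByIdParser("comp-initialData")
    parser.feed(html)
    parser.close()
    raw = "".join(parser.chunks).strip()
    if not raw:
        return None
    try:
        return json.loads(raw)
    except json.JSONDecodeError:
        return None
```

A caller would try the Mosaic providers first and only fall back to this function when none are found.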

Detail page: the embedded format

Each listing is loaded via viewtype=embedded, which returns pure JSON instead of HTML:

detail_url = (
    "https://fr.indeed.com/viewjob?viewtype=embedded"
    f"&jk={jk}&from=shareddesktop_copy&adid=0&spa=1&hidecmpheader=1"
)

The JSON is deeply nested -- each field (company, salary, contract, description) is extracted with multiple fallback paths. If the embedded JSON fails, the script falls back to the JobPosting JSON-LD (schema.org) present in the HTML.
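The multi-path lookup can be sketched with a small helper that tries several dotted paths in order and returns the first non-empty value. The summary table mentions a dict_get helper, but its real signature is not shown, so the version below is an assumption, and the paths in the usage example are hypothetical, not Indeed's actual field names.

```python
from typing import Any

# Values treated as "not found" so the next fallback path is tried
EMPTY_VALUES = (None, "", [], {})


def dict_get(data: Any, *paths: str, default: Any = None) -> Any:
    """Return the first non-empty value found by following dotted paths."""
    for path in paths:
        node = data
        for key in path.split("."):
            if isinstance(node, dict) and key in node:
                node = node[key]
            elif isinstance(node, list) and key.isdigit() and int(key) < len(node):
                node = node[int(key)]  # numeric segments index into lists
            else:
                node = None
                break
        if node not in EMPTY_VALUES:
            return node
    return default
```

For example, dict_get(payload, "job.company.name", "hiringOrganization.name") would first look in a hypothetical embedded structure and then fall back to the schema.org JobPosting field.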

Listing + detail merge

Listing data (title, company, location) is enriched with detail data (description, contract, full salary).
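One plausible merge rule, shown here as a sketch, is to let non-empty detail values override listing values while empty detail values leave the listing untouched. The function name and the exact precedence are assumptions; the real script may merge differently.

```python
def merge_listing_and_detail(listing: dict, detail: dict) -> dict:
    """Return a new dict where non-empty detail fields enrich the listing."""
    merged = dict(listing)  # never mutate the caller's listing
    for key, value in detail.items():
        if value not in (None, "", [], {}):
            merged[key] = value
    return merged
```

With this rule, a listing location of "Paris (75)" would be replaced by a more precise "Paris 75001" from the detail page, while a salary missing from the detail page keeps the value found in the listing.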

Request timing

Timing is crucial to avoid detection and behave like a regular user:

import random
import time

# Between detail pages (job listings)
delay = random.uniform(2, 4)
time.sleep(delay)
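The same delay can be wrapped in a small generator so that the pause happens only between items and never after the last one. This is a sketch: the name paced and the injectable sleep parameter (handy for testing without waiting) are assumptions.

```python
import random
import time


def paced(items, min_delay=2.0, max_delay=4.0, sleep=time.sleep):
    """Yield items, sleeping a random delay between consecutive ones."""
    for index, item in enumerate(items):
        if index > 0:
            # Randomized pause so requests are not evenly spaced
            sleep(random.uniform(min_delay, max_delay))
        yield item
```

A detail loop would then read `for job in paced(jobs): ...`, which keeps the timing logic out of the fetching code.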

Usage and output

Commands

# Without proxy (risk of blocking)

python scrape_indeed.py

# With proxy (recommended)

PROXY_URL="http://user:pass@host:port" python scrape_indeed.py

# Custom search, limit to 5 listings

python scrape_indeed.py --url "https://fr.indeed.com/jobs?q=python&l=Paris" --max 5

# Listing only (no detail page loading)

python scrape_indeed.py --no-detail

# Export results as JSON

python scrape_indeed.py --json-output
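These flags map naturally onto argparse. The sketch below shows one way to declare them; the flag names come from the commands above, while the default URL, the destination names, and treating --json-output as a simple on/off switch are assumptions.

```python
import argparse

# Placeholder default for this sketch; the script's real default may differ
DEFAULT_URL = "https://fr.indeed.com/jobs?q=python&l=Paris"


def build_parser() -> argparse.ArgumentParser:
    """Declare the command-line options shown in the usage examples."""
    parser = argparse.ArgumentParser(prog="scrape_indeed.py")
    parser.add_argument("--url", default=DEFAULT_URL,
                        help="search results URL to scrape")
    parser.add_argument("--max", dest="max_jobs", type=int, default=None,
                        help="maximum number of listings to process")
    parser.add_argument("--no-detail", action="store_true",
                        help="skip loading each listing's detail page")
    parser.add_argument("--json-output", action="store_true",
                        help="export the results as JSON")
    return parser
```

Calling build_parser().parse_args() then yields args.url, args.max_jobs, args.no_detail and args.json_output.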

What the script displays

The script displays each pipeline step with structured, colored logs. Each phase is timestamped:

======================================================================

Indeed Scraper -- curl_cffi + Chrome TLS Impersonation

======================================================================

[14:32:01] Creating curl_cffi session

> TLS impersonation: chrome

!! No proxy configured -- high risk of blocking

[14:32:01] Loading results page (SERP)

> URL: https://fr.indeed.com/jobs?q=alternance&l=France&sort=date...

> HTTP 200 -- 847,231 bytes

OK Page received, parsing in progress...

OK Format detected: Mosaic (2026+)

> Providers found: mosaic-provider-jobcards, MosaicProviderRichSearchDaemon, ...

OK 15 listings extracted from this page (Indeed total: 2847)

Right after parsing the listing, the extracted JSON for each job is displayed:

[14:32:02] JSON extracted from listing (15 jobs)

----------------------------------------------------------------------

--- Job #1 -- Web Developer Apprenticeship ---

{
  "job_key": "abc123def456",
  "title": "Web Developer Apprenticeship",
  "company": "TechCorp",
  "location": "Paris (75)",
  "salary": "1,200 EUR per month",
  "published_at": "2026-02-10T14:00:00+00:00",
  "url": "https://fr.indeed.com/viewjob?jk=abc123def456"
}

Then for each detailed listing, the script shows progress and enriched fields:

[14:32:03] Loading details (5 jobs)

> [1/5] Detail for: Web Developer Apprenticeship (jk=abc123def456)

OK Enriched fields: company, location, description, contract_type, published_at

> Pause 2.7s...

> [2/5] Detail for: Sales Assistant (jk=xyz789...)

OK Enriched fields: company, location, salary, description, published_at

At the end, a compact summary followed by the full enriched JSON (listing + detail merged):

[14:32:18] Final results (5 jobs)

#1 Web Developer Apprenticeship @ TechCorp -- Paris (75) | 1,200 EUR per month

#2 Sales Assistant @ SARL Dupont -- Lyon 69001

...

[14:32:18] Full enriched JSON

----------------------------------------------------------------------

--- Job #1 -- Web Developer Apprenticeship ---

{
  "job_key": "abc123def456",
  "title": "Web Developer Apprenticeship",
  "company": "TechCorp",
  "location": "Paris 75001",
  "salary": "1,200 EUR per month",
  "contract_type": "Apprenticeship",
  "published_at": "2026-02-10T14:00:00+00:00",
  "url": "https://fr.indeed.com/viewjob?jk=abc123def456",
  "description": "We are looking for a web developer apprentice... (1847 chars)"
}

Long descriptions are truncated in display (200 characters) but kept in full in the --json-output export.
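Display truncation of this kind takes only a few lines. The sketch below assumes a helper named preview and a suffix format modeled on the sample output above; the script's real implementation is not shown in this article.

```python
def preview(text: str, limit: int = 200) -> str:
    """Shorten text for display, appending the original length when cut."""
    if len(text) <= limit:
        return text
    return f"{text[:limit].rstrip()}... ({len(text)} chars)"
```

Only the console rendering goes through this helper; the exported JSON keeps the untouched description.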

Technical summary

| Layer | Implementation | Role |
|---|---|---|
| TLS | curl_cffi + impersonate="chrome" | TLS fingerprint identical to Chrome |
| HTTP | Sec-Fetch-* headers, Accept-Language, dynamic Referer | Application consistency |
| Network | Single proxy via PROXY_URL | IP rotation (manual) |
| Anti-bot | Redirect detection secure.indeed.com/auth | Blocking diagnosis |
| Pagination | First page only (login required since 2026) | Known limitation |
| Listing parsing | Mosaic providers + legacy fallback comp-initialData | Multi-format support |
| Detail parsing | Embedded JSON + JSON-LD JobPosting fallback | Multi-format support |
| Extraction | dict_get multi-path + per-field fallbacks | Resilience to variations |
| Timing | Random 2-4s delays between details | Human-like behavior |
| Output | Colored logs + JSON pretty-print + --json-output export | Traceability and debug |

The key is consistency across all these layers. The TLS fingerprint alone isn't enough if the HTTP headers are inconsistent. Good headers aren't enough if the IP is blacklisted. And since 2026, even with all that, pagination remains a challenge -- crawlergrid.ai handles all these constraints in production, reliably and sustainably.